Neural style transfer refers to the use of neural networks to apply the style of a style image to a content image.

(Most of the neural style transfer literature concerns images, but recent research has explored applying these techniques to other domains.)

Examples

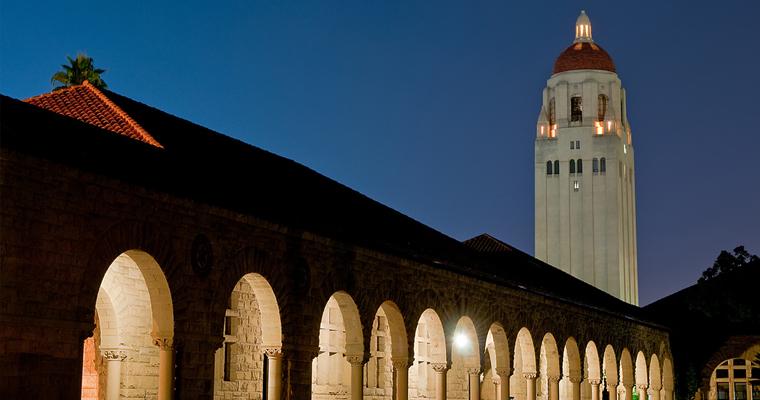

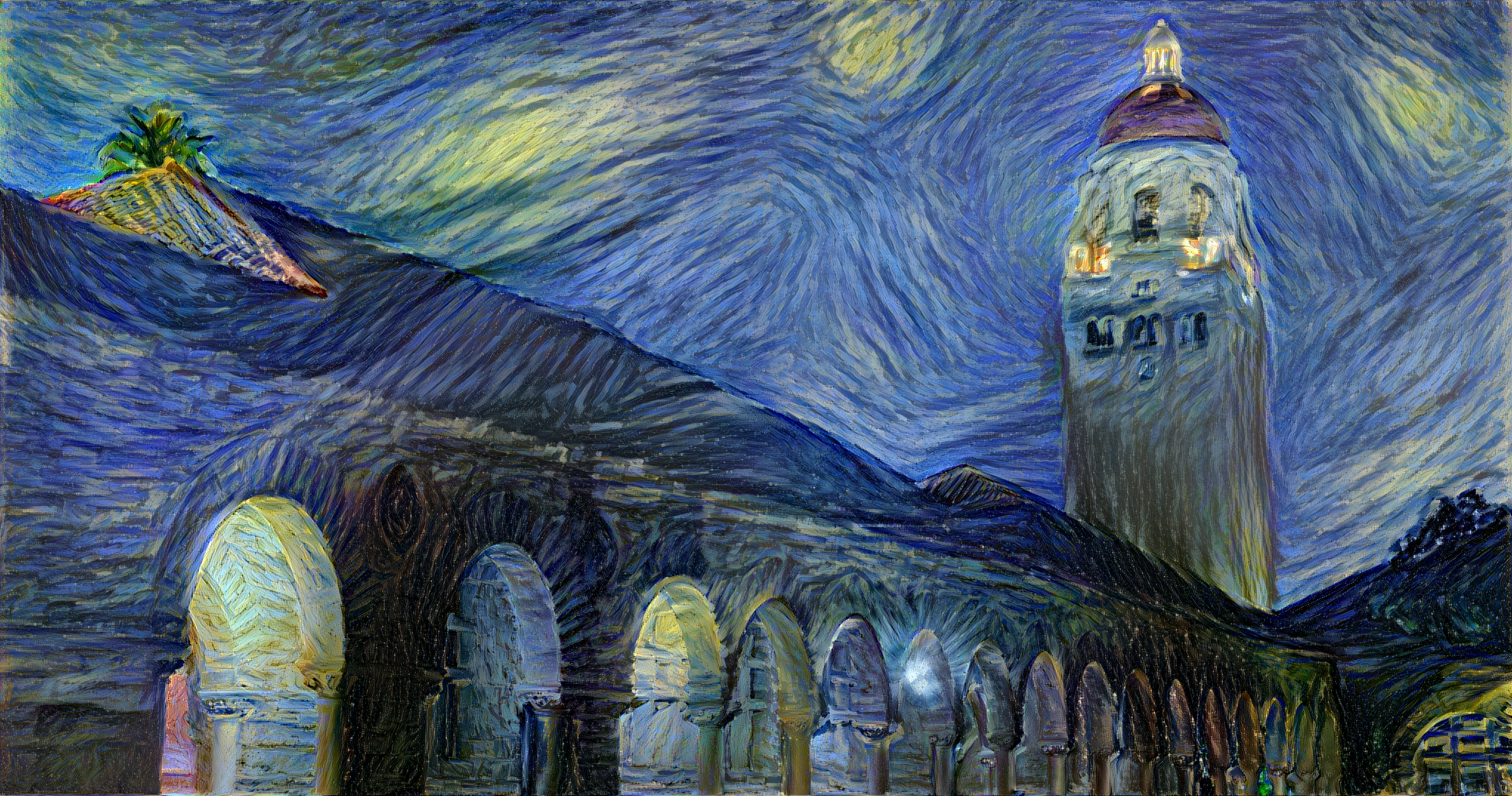

The examples above are from Justin Johnson of Stanford University. The top left image is Vincent van Gogh’s The Starry Night and the top right image is a photograph of Stanford’s Hoover Tower. The bottom image was generated by Justin Johnson using neural style transfer.

Top Left: Vincent van Gogh’s famous artwork titled The Starry Night

Top Right: A photograph of Hoover Tower on the Stanford University campus

Bottom: A synthetic image generated by Justin Johnson that depicts Stanford University’s Hoover Tower using the style of Vincent van Gogh’s The Starry Night

History

In the 1990s, some computer science researchers began exploring non-photorealistic rendering, which offered ways to generate images inspired by the style and texture of specific artwork (such as oil paintings). However, each of these techniques could generally reproduce only a narrow range of styles.

This limitation began to disappear in 2015 with the publication of the paper “A Neural Algorithm of Artistic Style” by Gatys, Ecker, and Bethge. The paper’s authors demonstrated the use of convolutional neural networks to generate images inspired by many different styles.

Loss Function

Broadly, neural style transfer uses gradient descent to minimize a loss function defined over the following three images, of which only the generated image \(x\) is updated:

- \(x_c\) – our input content image. In the above example, this would be the photograph of Stanford University’s Hoover Tower.

- \(x_s\) – our style image. In the above example, this would be van Gogh’s The Starry Night.

- \(x\) – our generated image. In the above example, this is the generated image of Hoover Tower in the style of The Starry Night.

The goal is to find the \(x\) that minimizes \(\alpha E_c(x, x_c) + \beta E_s(x, x_s)\), where:

- \(E_c(\cdot)\) is a function that measures the difference in content between \(x\) and \(x_c\).

- \(E_s(\cdot)\) is a function that measures the difference in style between \(x\) and \(x_s\).

- \(\alpha\) and \(\beta\) are weighting factors to balance whether the generated image favors accurate content or accurate style.
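
The weighted objective above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the method from the 2015 paper: the text does not define \(E_c\) and \(E_s\), so the sketch substitutes a pixel-wise mean squared error for \(E_c\) and a mean squared difference between Gram matrices of the raw color channels for \(E_s\). The original paper computes comparable quantities on feature maps from a convolutional network rather than on raw pixels. The function names (`gram`, `content_loss`, `style_loss`, `total_loss`, `total_grad`, `stylize`), the random toy images, and the step size are all hypothetical choices made for this example.

```python
import numpy as np

def gram(features):
    """Channel-by-channel correlation matrix of a (channels, pixels) array."""
    return features @ features.T / features.shape[1]

def content_loss(x, x_c):
    # Assumed stand-in for E_c: mean squared pixel difference.
    return np.mean((x - x_c) ** 2)

def style_loss(x, x_s):
    # Assumed stand-in for E_s: mean squared difference of Gram matrices.
    return np.mean((gram(x) - gram(x_s)) ** 2)

def total_loss(x, x_c, x_s, alpha, beta):
    # alpha * E_c(x, x_c) + beta * E_s(x, x_s)
    return alpha * content_loss(x, x_c) + beta * style_loss(x, x_s)

def total_grad(x, x_c, x_s, alpha, beta):
    # Analytic gradient of total_loss with respect to x only;
    # x_c and x_s are fixed inputs.
    c, n = x.shape
    grad_c = 2.0 * (x - x_c) / (c * n)
    grad_s = 4.0 * (gram(x) - gram(x_s)) @ x / (c * c * n)
    return alpha * grad_c + beta * grad_s

def stylize(x_c, x_s, alpha=1.0, beta=1.0, lr=1.0, steps=200):
    """Plain gradient descent on x, starting from the content image."""
    x = x_c.copy()
    history = [total_loss(x, x_c, x_s, alpha, beta)]
    for _ in range(steps):
        x -= lr * total_grad(x, x_c, x_s, alpha, beta)
        history.append(total_loss(x, x_c, x_s, alpha, beta))
    return x, history

# Toy "images": 3 channels, flattened pixels, values in [0, 1].
rng = np.random.default_rng(0)
x_c = rng.random((3, 64))
# The style image has a different size and a different channel balance.
x_s = rng.random((3, 100)) * np.array([[1.0], [0.5], [0.1]])

x, history = stylize(x_c, x_s)
print("loss before:", history[0], "loss after:", history[-1])
```

In this sketch, raising `beta` relative to `alpha` pulls the channel statistics of `x` toward those of `x_s`, while raising `alpha` keeps `x` closer to `x_c`. A practical implementation would typically replace the hand-derived gradient with automatic differentiation through a pretrained network.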